A.I.s, singularity, brain transfer... your thoughts?

Made a post on the UFO topic and mentioned AIs.

What are your thoughts about singularity, AIs? Do you think there is a valid reason to fear AIs, or is the idea of AIs trying to supplant humans just a thing of science fiction? What if we create AIs that are intelligent enough to improve themselves (or create another, more intelligent AI), which will do the same again, leading to the so-called technological singularity?

Or maybe you think that future brain implants, mind uploading and other such techniques will invalidate any kind of distinction between human minds and AIs?

OK, a lot of topics here.

1. I am not so scared of AIs. For decades now we have been served the technological bogeyman of the AI that will rule us all. It still seems as far away today as it did when they made the movie Colossus.

http://www.imdb.com/title/tt0064177/

I remember reading about walking robots in the early '80s. Robots are still walking at a pace that does not scare me. OK, BigDog is kinda scary, but not THAT scary either.

Seeing the progress we have made in the past 30 years, I am not too scared yet.

New technologies have often been challenging for older people. This has not changed. But then my 60-year-old mum can use Facebook and Skype, and she never really had any interest in computers at all.

2. Transferring a consciousness into a computer is just plain silly. It completely neglects the fact that our bodies affect brain function in many ways, which in turn affects personality. Lots of testosterone, e.g., or the lack thereof... No testes, no testosterone... There will be lots of very tame male "computer people".

That is not even mentioning the fact that the computer will only house a clone of your consciousness. Your real consciousness will die with your body: snip, lights out!

So, nah, I doubt that this will work. I think that lab-grown replacement organs, stem cells and genetic engineering are the better bet.

CaptainBeowulf

- Posts: 498

- Joined: Sat Nov 07, 2009 12:35 am

Singularity theory = possible, but not certain.

Elements of a singularity seem to be appearing. Open source group designing an open source machine that can print solar cells? Sounds like a primitive forerunner of a "cornucopia machine."

There are already oddballs wandering around with primitive cybernetic implants. Personally, I don't see how they avoid getting infections or scar tissue buildup around the places where they've inserted stuff through their flesh, but to each his (or her) own.

The internet is a sort of primitive worldwide neural network, although right now we mostly use it to insult and mock each other, watch p0rn, spread our weird pet theories... but there is occasionally genuinely useful discussion that just would never have happened in more traditional fora.

There are attempts to make autonomous machines, but not sentient ones. So, "big dog," DARPA desert race SUVs, autonomous drones... maybe analogous to worms or insects - they don't really "think," they react to stimuli according to a preprogrammed set of hard-wired responses... but, after all, that's the evolutionary precursor to thought.

The complexity theory part of singularity theory seems right to me. Although evolutionary biologists these days are very careful to say that there's no such thing as "progress" or "higher forms" of life vs "lower forms," there does seem to be a consistent pattern of more complex neural networks.

For instance, the Cambrian explosion animals included lots of very interesting and well-adapted body plans, but little in the way of brains that did more than react to immediate stimuli. After a couple of mass extinctions you got dinosaurs and other orders of life that co-existed with them. In most respects, it was as complex an ecosystem as has ever existed since, with some forms that were probably better adapted to their niches than anything around today. Nonetheless, the best estimates are that a tyrannosaur was probably on par with a chicken in terms of intelligence, and that "Jurassic Park" movie velociraptors are pure fantasy. They and closely related species were probably the smartest dinosaurs, but this means that they actually might have been as intelligent as a middling species of predatory bird around today - probably not as smart as crows or owls.

Then you get the age of mammals, and you get a lot of really smart mammals. Pack-hunting wolves are a lot smarter than chicken-brained predatory dinosaurs. Dolphins and whales have a body plan similar to ichthyosaurs and plesiosaurs, but much larger brains. Elephants overlap with sauropods in some aspects of biological niche and, through convergent evolution, body structure, but are much smarter. Some birds, like owls and crows, have gotten smarter. Finally, you get monkeys, apes, and then us.

There are individual examples where a certain lineage may lead from a smarter species to a dumber species, but overall, each grand epoch seems to have favored a large number of species having more complex neural networks.

Then, there's our development. Sometime between 1.5 million and 100,000 years ago we get fire. Around the same time we start making primitive stone tools, which gradually get more complex. At some unknown point we start using language, and by 50,000 to 100,000 years ago there's some abstract thought: both Neanderthals and modern Homo sapiens are burying their dead and possibly painting the bodies with red ochre or other things. Domesticating wolves into dogs possibly starts around here as well.

Assuming that there's no lost Atlantis-style civilization, 10,000 years ago we really get going with farming and domesticating lots of other animals. Then - Bronze Age, Iron Age, classical age... periods that we sometimes call "dark ages." Even during the medieval period, though, new technologies spread: windmills were perfected and widely disseminated; water mills became much more widely disseminated; the mouldboard plow was developed, which allowed intensive agriculture in northern Europe (which was impossible in the classical period); stirrups were either invented or became widely disseminated, allowing much more effective cavalry techniques; the Chinese fully developed gunpowder and the Turks learned to use it to make primitive cannons, and so on.

Then, of course, the Renaissance, Enlightenment, industrial revolution - now ideas based on new physical principles are rapidly exploited within decades. Wright brothers invent aircraft in 1903 - B-29s by 1945. Jet engines invented in the late 1930s/early 1940s - F-15s by the 1980s. At this point, things like F-35s are just tweaking and finessing the state of the art a bit... because most of the potential has already been exploited.

Similarly, Bell Labs produces the transistor in the late 1940s; transistor radios by the 1960s; primitive PGMs in the late 60s/early 70s; embedded electronics for flight control etc. by the late 70s; first personal computers around 1980; Pentiums by 1995; Ultrabooks, quad-core workstations with SSDs and smartphones by 2012.

The large amount of computing power and communications bandwidth seems to make it feasible that mental and technological complexity will continue to increase exponentially for at least a little while longer.

All that said, it's still a big leap to possibly uploading a mind from a brain into a computer, or from a computer to a cloned body. We really don't know yet if that's possible.

We also don't know if we can really program something to be self-aware, or whether it will still just be an automaton feigning self-awareness because it's been programmed to act that way.

If it all turns out to be technologically/biologically possible, then I suppose it depends on how we treat each other. If we make truly sentient machines but treat them as slaves, they probably will rebel. If some people upload their minds/genetically alter themselves to not grow old/turn themselves into cyborgs... on the one hand, it depends on whether any of them decide they want to force their way of life on others, and on the other hand... it depends on whether the general population decides that they're monsters and tries to destroy them. A human-machine war could happen, but isn't guaranteed.

If a whole pile of minds (whether AIs or uploads or a combination of both) merge together and create an AI that is so smart that it starts to continually rebuild itself to be better, it might decide to just turn everything else on the planet into raw materials. Or, it might well still inherit a moral compass from its precursor minds, in which case it may well decide to just build a large spaceship "body" for itself and leave. That way the primitives can continue to live their lives in terms that they can understand while it can go about comprehending the universe.

In his book Singularity Sky, Charles Stross proposes an interesting singularity called the Eschaton, which does decide to leave, but for reasons known best to itself also decides to remove most of the human population from Earth and instantaneously transport them through small wormholes to a few hundred other planets in a 5000 light year radius around Earth. It then ignores them, except to intervene rather malevolently when civilizations attempt to use FTL drives to violate causality. It's not above making a sun go supernova to take out a regime that's bent on starting a time war. Seems as likely to me as any other scenario.

Re: A.I.s, singularity, brain transfer... your thoughts?

AcesHigh wrote:

Made a post on the UFO topic and mentioned AIs.

What are your thoughts about singularity, AIs? Do you think there is a valid reason to fear AIs, or is the idea of AIs trying to supplant humans just a thing of science fiction? What if we create AIs that are intelligent enough to improve themselves (or create another, more intelligent AI), which will do the same again, leading to the so-called technological singularity?

Or maybe you think that future brain implants, mind uploading and other such techniques will invalidate any kind of distinction between human minds and AIs?

I suspect that Jeff Hawkins' concepts are correct - it will be far easier to construct and "train up" an AI than to "upload" a human mind. In point of fact, it has probably been doable for several years now.

http://en.wikipedia.org/wiki/On_Intelligence

http://en.wikipedia.org/wiki/Memory-pre ... _framework

Hawkins posits that the seat of intelligence - the cortex - is VERY generic in structure. It perceives the world based on the nature of the sensory electrical signals fed into it from its earliest days. This is why children raised in darkness for their first few months or years are blind forever - the brain does not build up the ability to interpret visual electrical signals in its first few years. Feed different types of "sensory" signals into the artificial cortex of an "infant" AI, and it may well grow up to perceive the world in ways you, I and all animal life on this planet would be unable to comprehend. OTOH, you can use such "biases" in sensory feed to train up a near-perfect weather or fusion-process predictor AI. Preferred AIs may well be artificial autistic savants - VERY good at what they are trained to do. Alternatively, the AIs trained up to fly combat UAVs would be "trained" similarly to police dogs.

Lastly, such an AI is only dangerous if the synthetic brain is constructed or digitally modeled with the emotive parts of the human brain "built in." Fail to include those, and there is no possibility of an existential threat à la Skynet. The emotive parts of the brain control ego, fight-flight, and sense of self. None of that, no possibility of Skynet. And none of those are strictly necessary for intellect - which is what the cortex is for.

Vae Victis

Thanks Djolds (and others). Very interesting.

I have some ideas similar to what you said. Our intelligence is too dependent on our evolutionary history, as well as on our life experience.

Psychologists who deal with problematic children know very well how much parents' behavior influences their children's development. There is stuff that we all do with our children (almost unconsciously), like playing, that helps the child become aware of its own body ("I").

But anyway, the stimuli the child receives from the world around it are still superimposed on a brain with plenty of almost hardwired stuff: some of it makes us human, and some of it is much older, instincts and such that exist in most animals and go back much further.

Like, for example, curiosity (probably an evolutionary solution to help understand the environment and search for survival options) and reproduction (not only the will to multiply our genes but also to PROTECT the offspring).

But above all, survival instinct. This instinct is molded into animals because, obviously, the living beings that fought hardest to survive had more chance to multiply and pass that trait on.

I don't think these instincts can easily be programmed into an AI. Humans would need to create a virtual accelerated evolution, with programs evolving and trying to survive, and then incorporate the resulting complex patterns into some base layer of the AI's personality.

Honestly, an AI is not dangerous, IMHO, if it does not truly have curiosity to do things, a will to dominate its group or to override commands to the contrary, and a self-defense or survival instinct that makes it avoid being shut down, or plan to avoid being shut down (even with very long-term intelligent thinking).

Now of course, we might think: and who the hell would try to implant a survival instinct in a superintelligent AI, which would make it AVOID being shut down by humans, feel threatened and plan to respond?

Well, who knows. A doomsday group trying to fulfill its own prophecy? Terrorists? Singularity enthusiasts? It's entirely possible, IMHO, to create these dangerous AIs... but ALWAYS ON PURPOSE. What I don't think is that an AI will be of any danger just by being self-conscious.

You could very well have an ultra-intelligent AI that can discover the meaning of the universe, calculate probabilities like Isaac Asimov's psychohistory, discover that humans are a threat to its existence or to the universe itself in the distant future, and go like: "Who the frick cares? I'd rather be dead than be aware anyway."

Wrapping your mind around a synthetic copy

The brain is in the end just a very complex network.

A network that is partly founded on rules set by genetics, partly set by biology and finally experience.

At this time, acceptance of the possibility of mind upload is blocked because the general public sets an impossible requirement.

1:1 emulation. We don't consider the mind as being 'uploaded' if it's not perfectly the same.

I've let go of this notion.

When we consider how vastly differently our brain works when simply exposed to our body's own chemicals, let alone EXTERNAL chemicals, the very notion of establishing how our original brain should work is, simply put, silly.

Then we look at actual neurological problems: people coming back after severe brain damage due to bleeding, shortage of food, stress.

Because the change is gradual, we keep saying it is still the same person.

It takes a snapshot impression to find out the actual change, and even then the likeness of the person will bias our impression.

Throughout our lives we become different persons, connected by memories of who they all were. We do not perceive it as such, but we are constantly changed in small ways.

Now back to mind-upload. I am certain that 1:1 emulation is unobtainable. And I believe this should not matter.

Every action performed on our brain influences it. Some influences are more severe than others. For instance, the very act of releasing a chemical into your brain to increase its longevity will influence it. Not only in the intended way; in many tiny ways, it influences other biological processes. We hardly consider this, because it lies in the range of changes we are exposed to on a daily basis.

Brain-Upload before understanding it

If I wait until we fully understand the complete workings of the brain before I try to 'upload' my brain, it will have decomposed into its separate minerals and probably become part of various flora and fauna. There won't be much left to 'upload'.

So I use the same tactic I use when I need to transfer my computer to a new system: I back up as much as possible, in the hope that I have everything available should I find out later that I forgot something.

The same can be done with making a backup of a brain.

Data, heaps and heaps of data.

It is my understanding that at the moment, MRI scans are done with the focus on time efficiency. If you suggest that one slice should be scanned 5 times, doctors will tap their watch and ask you if you have any idea how expensive this would be.

I don't believe super-resolution techniques are used in the scanning process. So there seem to be improvements to be made in existing methods.
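A minimal sketch of why scanning one slice several times could pay off, assuming the measurement noise is independent Gaussian noise from scan to scan (a toy model, not a description of how real MRI pipelines work). It shows plain signal averaging, which is simpler than true super-resolution; all names (`simulate_scan`, `average_scans`, `rms_error`) and numbers are made up for illustration. Under that assumption, averaging N scans cuts the noise by roughly the square root of N.

```python
import random
import statistics


def simulate_scan(true_signal, noise_sd, rng):
    """Return one noisy measurement of every voxel in a slice (toy model)."""
    return [v + rng.gauss(0.0, noise_sd) for v in true_signal]


def average_scans(scans):
    """Average repeated scans of the same slice, voxel by voxel."""
    return [statistics.fmean(voxel) for voxel in zip(*scans)]


def rms_error(estimate, truth):
    """Root-mean-square difference between an estimate and the true signal."""
    squared = sum((e - t) ** 2 for e, t in zip(estimate, truth))
    return (squared / len(truth)) ** 0.5


rng = random.Random(0)                                # fixed seed, repeatable run
true_signal = [float(i % 10) for i in range(2000)]    # hypothetical "slice"
noise_sd = 1.0                                        # assumed noise level

single = simulate_scan(true_signal, noise_sd, rng)
repeated = [simulate_scan(true_signal, noise_sd, rng) for _ in range(5)]
averaged = average_scans(repeated)

single_error = rms_error(single, true_signal)
averaged_error = rms_error(averaged, true_signal)
print(f"one scan: {single_error:.3f}, five averaged: {averaged_error:.3f}")
```

With five repeats the error should drop to a bit under half of the single-scan error, which is the trade-off against scanner time that the doctors tapping their watches are weighing.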

There are some novel ways in which MEG and EEG scans can be combined. Being able to perform multiple tests simultaneously using preset sensory inputs would at least offer a way to use the synchronised data to work back to a possible 'solution', where the 'solution' is the origin of the received data.

With some creativity, you can compare this with CERN's LHC, the ATLAS detector to be precise. You try to catch as much data as possible from the brain, and hope to use your knowledge of the brain to trace the data back to a plausible explanation of why that data was produced, using a point of reference.

By establishing the neural landscape, and collecting signals from this landscape, I hope to establish a model that would explain the relation between these two sets of data.

It won't be the original, but an approximation which could get better as we establish better models of the actual brain and our tools for measuring become better.

For people today we are stuck with the data collected now, and will just have to hope enough is being collected to compensate for the lack of detail.

Because some data is better than no data: no amount of knowledge can change an absence of data into an approximation of your brain.

This is what I plan to do when I find time and the right people.

EDIT: spelling

The brain is in the end just a very complex network.

A network that is partly founded on rules set by genetics, partly set by biology and finally experience.

At this time, the acceptance of the possibility of mind-upload is blocked by the general public by setting an impossible requirement.

1:1 emulation. We don't consider the mind as being 'uploaded' if it's not perfectly the same.

I've let go of this notion.

When we consider how vastly different our brain works when simple exposed by our body's own chemicals, little alone EXTERNAL chemicals, the very notion of establishing what our original brain should work like is simply said, silly.

Then we look at actual neurological problems, people coming back after severe brain damage due to bleeding. Shortage of food, stress.

Because the change is gradual, we keep saying it is still thesame person.

It takes a snapshot impression to find out the actual change, and even then the likeness of the person will bias our impression.

Throughout our lives we become different persons, connected by memories of who they all were, we do not perceive this as such, but we are constantly changed in small ways.

Now back to mind-upload. I am certain that 1:1 emulation is unobtainable. And I believe this should not matter.

Every action preformed on our brain, influences it. Some influences are more severe then others. For instance, the very act of releasing a chemical into your brain to increase it's longevity will influence it. Not only in the intended way, but in many tiny ways, it influences other biological processes. We hardly consider this. Because this lies in the range of changes we are exposed to on a daily basis.

Brain-Upload before understanding it

If I wait until we fully understand the complete working of the brain before I try to 'upload' my brain, it will have decomposed into its separate minerals and probably become part of various flora and fauna. There won't be much left to 'upload'.

So I use the same tactic I use when I need to transfer my computer to a new system: I back up as much as possible, in the hope that I have everything available should I find out later that I forgot something.

The same can be done with making a backup of a brain.

Data, heaps and heaps of data.

It is my understanding that at the moment, MRI scans are done with the focus on time efficiency. If you suggest that one slice should be scanned 5 times, doctors will tap their watch and ask you if you have any idea how expensive this would be.

I don't believe super-resolution techniques are used in the scanning process. So there seem to be improvements to be made in existing methods.

There are some novel ways in which MEG and EEG scans can be combined. Being able to perform multiple tests simultaneously using preset sensory inputs will at least offer a possible way to use the synchronised data and interpret it as a possible 'solution', where the 'solution' is the origin of the received data.

With some creativity, you can compare this with CERN's LHC, the ATLAS detector to be precise. You try to catch as much data as possible from the brain, and hope to use your knowledge of the brain to trace the data back to a plausible explanation of why that data was produced, using a point of reference.

By establishing the neural landscape, and collecting signals from this landscape, I hope to establish a model that would explain the relation between these two sets of data.

It won't be the original, but an approximation which could get better as we establish better models of the actual brain and our tools for measuring become better.

For people today we are stuck with the data collected now, and will just have to hope enough is being collected to compensate for the lack of detail.

Because some data is better than no data; no amount of knowledge can change an absence of data into an approximation of your brain.

This is what I plan to do when I find time and the right people.

EDIT: spelling

-

paperburn1

- Posts: 2488

- Joined: Fri Jun 19, 2009 5:53 am

- Location: Third rock from the sun.

I've been in the "transhumanist" community for the past 25 years (actually helped to create it). I know the emphasis the self-described transhumanists have on AI and uploading. These are theoretically possible. But I don't expect to see them in the next 50 years.

I'm more of an 80's style transhumanist, which is described on NBF as the "mundane" singularity. I expect SENS and other forms of radical life extension to be available in the coming decades, along with synthetic biology and bio-engineering in general. 3D printing/additive manufacturing will also progress during this time. Same for robotics and general automation. The wild cards, which are looking increasingly likely, are fusion (plasma and LENR) and the Woodward-Mach thing. These technologies ought to make seasteading and space colonization far cheaper and easier to do by small, self-interested groups.

It is the capability of small groups or even individuals to do what can currently only be done by governments or large corporations that is of most interest to me. I believe very strongly that the preservation of individual liberties (and the elimination of rent-seeking parasitism) is totally dependent on the continuation of this trend. I believe that our future (those of us who seek openness and freedom) absolutely requires that we make this trend continue.

We may get AI by mid-century. However, if so, I think it will be very different from human brains. I regard uploading as a fantasy. The promulgators of uploading ignore much of neuro-biology. I think it's possible to store someone's identity inactively in computer memory, sort of like the digital equivalent to cryonic suspension. However, I don't think it will be possible to express such an individual actively as software.

Lastly, yes I think cryonics can work and do recommend it for anyone who is unlikely to make it to the time SENS becomes available (2040??).

www.alcor.org

kurt9 wrote:...AI and uploading. These are theoretically possible. But I don't expect to see them in the next 50 years.

As a finished product? Because I also don't expect to see Cryogenic Resuscitation in the next 50 years. Cryogenic Freezing however...

kurt9 wrote:I regard uploading as a fantasy. The promulgators of uploading ignore much of neuro-biology. I think it's possible to store someone's identity inactively in computer memory, sort of like the digital equivalent to cryonic suspension. However, I don't think it will be possible to express such an individual actively as software.

I'm puzzled by your statement.

You mentioned it was possible in theory, and you now mention it is a fantasy.

Like cryonic suspension, mind upload TODAY can be done under the same pretence as cryonics, where we assume the Future(tm) will contain the technology to take the second step.

And yes, it ignores a lot about neuro-biology.

But personally I think (like cryonics) that a couple of gaps may be filled in at a later stage. The gamble here (and it is a gamble that needs to be made) is that the data we CAN retrieve from the brain might be enough to create a working model at a later stage.

kurt9 wrote:Lastly, yes I think cryonics can work and do recommend it for anyone who is unlikely to make it to the time SENS becomes available (2040??).

www.alcor.org

This is my sentiment as well for neuro-imaging.

vernes wrote:As a finished product? Because I also don't expect to see Cryogenic Resuscitation in the next 50 years. Cryogenic Freezing however...

I don't expect reanimation from cryonics in 50 years either. I do think it will be possible by, say, the mid-22nd century.

vernes wrote:I'm puzzled by your statement.

You mentioned it was possible in theory, and you now mention it is a fantasy.

My comment about uploading being a fantasy means that I do not expect uploading as an active entity to be possible for a few centuries at least. By it being theoretically possible, I meant that it does not violate any laws of physics and that it could be possible in, say, 1,000 or 10,000 years. I think it will be possible to scan someone's brain, with molecular or sub-cellular level resolution, and store that information inactively in computer memory within the next 30-40 years. However, reanimation will involve producing a new body (and mind), probably based on synthetic biology, and configuring the brain's synapses to match the information in computer memory. This is what I expect in the future.

kurt9 wrote:It is the capability of small groups or even individuals to do what can currently only be done by governments or large corporations that is of most interest to me. I believe very strongly that the preservation of individual liberties (and the elimination of rent-seeking parasitism) is totally dependent on the continuation of this trend. I believe that our future (those of us who seek openness and freedom) absolutely requires that we make this trend continue.

I agree.

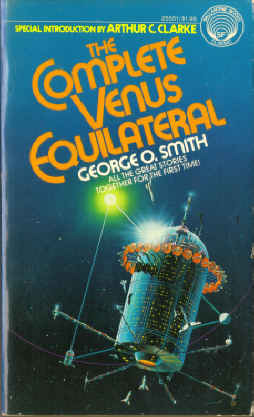

Something similar to what you describe occurs in the book "The Complete Venus Equilateral." They turn a Martian matter transmitter into a matter replicator, and it makes owning anything as simple as getting a copy of its recording. (Written in 1942-1945. Today we would call it a file.)

It DOES mean freedom for mankind. Freedom from material want at least.

Anyways, great book.

More centrally to your point, making the ability to produce materials and products ubiquitous will certainly allay part of the problem with depending on sole source producers or central governmental intervention.

Teach a man to fish... and you make him independent.

‘What all the wise men promised has not happened, and what all the damned fools said would happen has come to pass.’

— Lord Melbourne —


choff wrote:Was looking at a book called 'Dark Pools' about A.I. and the stock market. Seems intelligent programs are getting very good at stock picks, the downside is the next crash could be caused by it. The programs literally prey on each other.

That deserves a post in the "Skynet is coming" thread. The machines are beginning to realize that economics is more effective than nukes.